1. Intro

Morloc is a strongly-typed functional programming language where functions are imported from foreign languages and unified under a common type system. This language is designed to serve as the foundation for a universal library of functions. Each function in the library has one general type and zero or more implementations. An implementation may be either a function sourced from a foreign language or a composition of such functions. All interop code is generated by the Morloc compiler.

2. Why Morloc?

2.1. Compose functions across languages under a common type system

Morloc allows functions from polyglot libraries to be composed in a simple functional language. The focus isn’t on classic interoperability (e.g., calling Python from C) or serialization (e.g., sending data between applications via protobufs) — though morloc implementations may use these under the hood. Instead, you define types, import implementations, and build complex programs through function composition. The compiler invisibly generates any required interop code.

2.2. Write in your favorite language, share with everyone

Do you want to write in language X but have to write in language Y because everyone in your team does or because your expected users do? Love C for algorithms, R for statistics, but don’t want to write full apps in either? Morloc lets you mix and match, so you can use each language where it shines, with no bindings or boilerplate.

2.3. Run benchmarks and tests across languages

Tired of learning new benchmark and testing suites across all your languages? Is it hard to benchmark similar tools wrapped in applications with varying input formats, input validation costs, or startup overhead? In Morloc, functions with the same general type signature can be swapped in and out for benchmarking and testing. The same test suites and test cases will work across all supported languages because inputs/output of all functions of the same type share equivalent Morloc binary forms, making validation and comparison easy.

2.4. Design universal libraries

With Morloc, we can build abstract libraries using the general types as a logical framework. Then we can import implementations of these functions from one or more of the supported languages and easily test and benchmark them. These libraries are the foundation for an ecosystem where functions may be verified, organized/searched by type, and used to build rigorous programs.

2.5. Make composable and deployable tools

A Morloc module can be compiled directly into a CLI tool with rich subcommands and automatically generated usage statements. These CLI tools can be composed with just a few lines to make custom toolboxes. They can also be compiled as daemons serving over UNIX sockets, TCP or HTTP.

2.6. Make better scientific workflows

Within the scientific programming space, Morloc can serve as a replacement for the brittle application/file paradigm of workflow design. Replace heavy CLI applications with pure function libraries, ad hoc textual file formats with explicit data structures, and workflow specifications with function compositions. See the first Morloc paper for details (here).

3. Getting Started

3.1. Installing Morloc

The easiest way to run Morloc is through containers in a UNIX environment. Linux will work natively. MacOS and Windows are more complicated and I’ll deal with their special cases later on. For Windows, you will need to install through the Windows Subsystem for Linux.

3.1.1. Installing morloc-manager

The morloc-manager utility streamlines the management of Morloc

environments. The binaries can be downloaded directly from GitHub

(here) or you can follow

the script below can be followed to install the latest binary:

$ sys="linux-x86_64" # or "linux-arm64" / "macos"

$ mim_url=$(curl -s https://api.github.com/repos/morloc-project/morloc/releases/latest | grep browser_download_url | grep morloc-manager-${sys} | grep -o 'https[^"]*')

$ curl -Lo morloc-manager "$mim_url"

$ chmod +x morloc-manager

$ mv morloc-manager ~/.local/bin/On macOS you may need to clear the quarantine attribute:

$ xattr -d com.apple.quarantine morloc-managermorloc-manager relies on containers, so you will also need a container

engine. Two are supported: Podman and Docker. I recommend Podman for rootless

local work. If you have both engines installed, you will need to tell

morloc-manager which you are using with the command:

$ morloc-manager setup --engine podman

Engine set to: podmanPodman instructions

Unlike Docker, podman runs rootless by default, so no sudo is required. On

Linux, it also runs natively with no daemons.

On MacOS and Windows (even through WSL), a virtual machine is required. So you

will need to initialize podman as so:

$ podman machine init

$ podman machine startYou can confirm that podman is running by entering

$ podman --version

podman version 5.4.1 # version on my current setupThe morloc-manager utility usage information can be accessed with the -h option:

$ morloc-manager -h

morloc-manager - container lifecycle manager for Morloc

Usage: morloc-manager [OPTIONS] [COMMAND]

Development

setup Configure the default container engine

new Build a new morloc environment

run Run a command in the active environment

rm Remove a morloc environment

ls List morloc environments

info Show configuration and installed environments

select Select an environment

update Rebuild an environment

nuke Remove all morloc environments

Deployment

start Serve an environment over the network

stop Stop a running serve container

logs Stream logs from a running serve container

freeze Export installed state as a frozen artifact

unfreeze Build a portable serve image from frozen state

status List running serve containers

doctor Check environment health and diagnose issues

Options

-v, --verbose Print container commands to stderr before executing

--json Output machine-readable JSON instead of human-readable text

--version Print version and exit

-h, --help Print help (see more with '--help')To get started with Morloc, we will only need subcommands from the Development

section of the usage statement above.

3.1.2. Creating environments

An environment is a named, self-contained Morloc installation: a container image, a data directory for modules and binaries, and optionally a custom Dockerfile layer with extra dependencies.

We can create a new environment and name it base with the command below:

$ morloc-manager new --non-interactive base

Pulling ghcr.io/morloc-project/morloc/morloc-full:latest...

Created environment: base

Initializing morloc (this may take several minutes)...

Environment 'base' is ready.

Activate it with: morloc-manager select baseBy default, the new subcommand pulls the latest Morloc release. There are many

additional options, but this default environment will be sufficient for running

nearly everything in the Morloc docs.

We can check that the new environment is available with ls. You might see

something like this (your environments will differ from mine):

$ morloc-manager ls

Local environments:

base [0.85.0]

edge [0.79.0] (active)Next we should activate the new environment we just created:

$ morloc-manager select base

Selected environment: baseeverthing is set up correctly with info:

$ morloc-manager info

Active: edge

Local engine: podman

System engine: podman

SELinux: not detected

Directories:

Config (local) /home/z/.config/morloc (exists)

Data (local) /home/z/.local/share/morloc (exists)

Config (system) /etc/morloc (exists)

Data (system) /usr/local/share/morloc (not found)

Local environments:

base [0.85.0] (active)

edge [0.79.0]You can get details on a particular environment as well:

$ morloc-manager info base

Name: base

Scope: local

Active: yes

Base image: ghcr.io/morloc-project/morloc/morloc-full:0.85.0

Morloc version: 0.85.0

Engine: podman

SHM size: 512m

Dockerfile: none

Flags: /home/username/.config/morloc/environments/base/env.flags

Data dir: /home/username/.local/share/morloc/environments/base3.1.3. The Morloc shell and first runs

You can run commands inside the environment:

$ morloc-manager run -- morloc --version

0.85.0This confirms that that the morloc compiler is installed and shows its version.

Alternatively you can create an interactive session inside the Morloc container:

$ morloc-manager run --shellYou are now inside a shell in the container. You can check that the Morloc compiler installed in the container is indeed the latest version:

$ morloc --version

0.85.0 # you may have a later versionThe current working directory from your system is mounted. All changes you make in this directory will persist. The Morloc module directory for this environment from your home system is mounted as well, so any Morloc modules you install will persist. That beind the case, let’s install the Morloc standard library:

$ morloc install stdlibThe Morloc stdlib module is a re-exporter of all the Morloc individual

standard library modules. So installing it is a shortcut for explicit

installation.

You are now ready to run almost any code in the docs.

3.2. Setting up IDEs

We are currently working on expanding the editor support for Morloc.

Below are the editors that are supported or under development.

vim

If you are working in vim, you can install Morloc syntax highlighting as follows:

$ mkdir -p ~/.vim/syntax/

$ mkdir -p ~/.vim/ftdetect/

$ curl -o ~/.vim/syntax/loc.vim https://raw.githubusercontent.com/morloc-project/vimmorloc/main/loc.vim

$ echo 'au BufRead,BufNewFile *.loc set filetype=loc' > ~/.vim/ftdetect/loc.vim

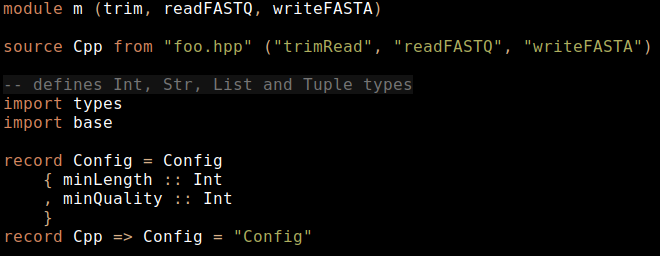

VS Code / VSCodium / Cursor

We have a publicly available "morloc" extension with support for highlighting and snippet expansion.

Zed

This is currently under development, see repo here.

The extension is mostly written, and the required Tree-sitter grammar is written, but there are bugs to be resolved. I’m happy to accept pull requests!

I’ve also written several syntax highlighting and static analysis tools:

Pygmentize

A repo with the Pygmentize parser can be found here. This parser is used to highlight code here in the manual. It can be easily integrated into Python code, e.g., in the Weena discord bot.

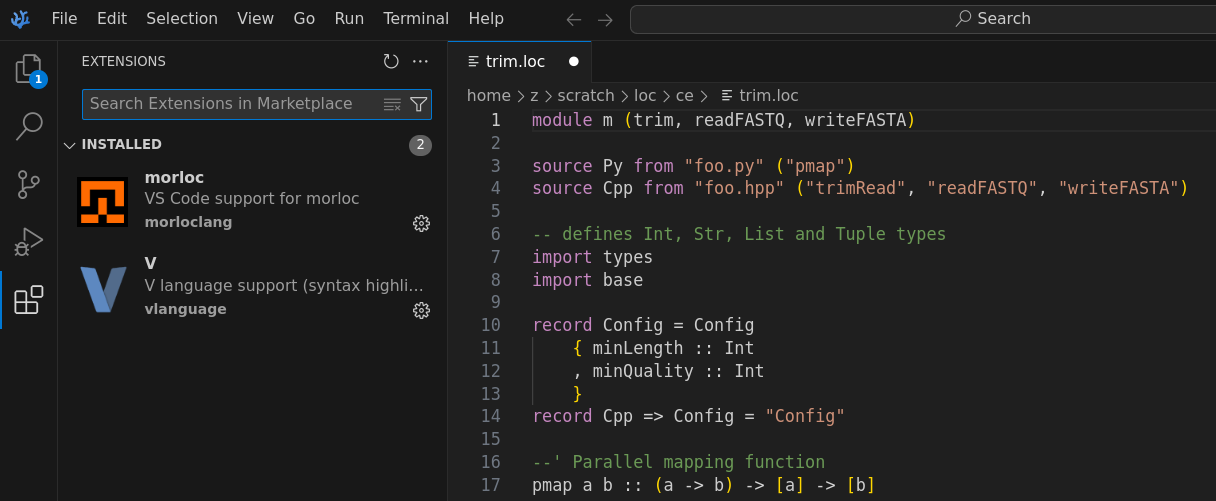

Tree-sitter

Tree-sitter is a program for defining parsers and using them to query languages and add advanced grammatical understanding to editors. These grammars require a complete lexer and parser specification for the language. This grammar is available for Morloc, see repo here. Tree-sitter allows general purpose syntax highlighting (e.g., over the command line) and parses a full concrete syntax tree from the code:

3.3. First Morloc programs

The inevitable "Hello World" case is implemented in Morloc like so:

module hw (hello)

--' A Morlock's hello world

hello = "Hello up there"The module named hw exports the term hello which is assigned to a literal

string value. The --' syntax adds a docstring that describes the term.

Paste this code into a file (e.g. "hello.loc") and then it can be imported by other Morloc modules or directly compiled into an executable program.

$ morloc make hello.locThis command will produce an executable named after the module (in this case,

hw) and pool files for each language used (e.g., pool.py, pool-cpp.out,

pool.R) in the pools/ directory. The executable is the command line

interface (CLI) to the concrete commands exported from the module.

Calling the executable with the -h flag, will print a help message:

$ ./hw -h

Usage: ./hw ARG

A Morlock's hello world

Nexus options (long form only; short forms reserved for the program):

--help Print this help message

--print Pretty-print output for human consumption

--output-file Print to this file instead of STDOUT

--output-form Output format [json|mpk|voidstar]

--keep-null Print top-level () or None as 'null' (default: empty)

Daemon mode:

--daemon Run as a long-lived daemon

--http-port PORT Listen on HTTP port

--port PORT Listen on TCP port

--socket PATH Listen on UNIX socket

Return: StrWe can ignore the nexus and daemon options for now. This usage message is

automatically generated. For each exported term, it specifies the input (none,

in this case) and output types as inferred by the compiler. For this case, the

exported command is just the term hello, so no input types are listed and the

return type is a string.

Because hw exports exactly one function, the COMMAND name is optional: you

can either write it explicitly or omit it.

$ ./hw hello

"Hello up there"

$ ./hw # equivalent: the only exported command is the implicit one

"Hello up there"3.4. Unit conversion example

To introduce Morloc programming, let’s develop a simple unit conversion program.

Let’s define a few unit conversions in C++ and write it to the file units.hpp:

#pragma once

double cels2fahr(double cels){

return 1.8 * cels + 32.0;

}

double meters2feet(double meters){

return meters * 3.28084;

}Now let’s source the C++ code in the Morloc file units.loc:

module units (cels2fahr, meters2feet)

source Cpp from "units.hpp" ("cels2fahr", "meters2feet")

type Cpp => Real = "double"

--' Convert from Celsius to Fahrenheit

cels2fahr :: Real -> Real

--' Convert from meters to feet

meters2feet :: Real -> RealHere we define a new module and its exports. The source statement reads

foreign language code and specifies the terms that should be imported from the

source code. The type phrase maps the general Morloc type Real to the

concrete C++ type double. The remaining lines define the type signatures for

the two unit conversion functions. Both map a Real input to a Real output.

We can compile and run it as below:

$ morloc make units.loc

$ ./units cels2fahr 100

212The Morloc compiler will build a C++ program from the Morloc script. The compiler also generates help statements:

$ ./units -h

Usage: ./units [OPTION...] COMMAND [ARG...]

Nexus options (must precede COMMAND):

-h, --help Print this help message

-p, --print Pretty-print output for human consumption

-o, --output-file Print to this file instead of STDOUT

-f, --output-format Output format [json|mpk|voidstar]

--keep-null Print top-level () or None as 'null'

(default: produce empty output)

Daemon mode:

--daemon Run as a long-lived daemon

--http-port PORT Listen on HTTP port

--port PORT Listen on TCP port

--socket PATH Listen on Unix socket

Commands (call with -h/--help for more info):

cels2fahr Convert from Celsius to Fahrenheit

meters2feet Convert from meters to feetThe nexus and deamon mode options are present in all compiled Morloc programs and offer options for output format and run mode. These advanced options will be discussed later.

3.4.1. Defining language-agnostic functions

This C++ definition for unit conversions is fine, but it would be helpful to be able to do conversions natively in any language without needing to define new helpers or make foreign calls to the C++ functions. In Morloc we can write code that is language independent, like so:

module units (cels2fahr, meters2feet)

import root

--' Convert from Celsius to Fahrenheit

cels2fahr cels = 1.8 * cels + 32.0

--' Convert from meters to feet

meters2feet meters = meters * 3.28084If you get the error that the root is not installed, you can install all the

Morloc standard library modules with:

$ morloc install stdlibThe root module is a language independent Morloc module that defines the

typeclasses for arithmetic among other things. Since our new module is fully

language-agnostic, we cannot directly compile it, but we can typecheck it:

$ morloc typecheck units2.loc

cels2fahr :: Real -> Real

meters2feet :: Real -> RealNow to actually compile this program into something we can execute, we need to

import a module that contains sourced implementations. Let’s define a main.loc

module that imports the types module.

module main (cels2fahr, meters2feet)

import .units

import root-cppThe .units specifies the relative path to a local Morloc module. The module,

root-cpp, stores C++ implementations of root terms, here we just need the

arithmetic operator definitions.

If we instead wanted to build in Python, we could substitute the root-cpp

import for root-py. Alternatively, we could import both root-cpp and

root-py and let the compiler decide which implementations to use.

3.5. Polyglot programs

Morloc can freely mix languages. Suppose we have a function in Python for writing reports:

# format.py

def report(ctemp, c2f):

return f"The current temperature is {ctemp}°C ({c2f(ctemp)}°F)"This function takes a temperature in Celsius and a Celsius-to-Fahrenheit converting function as arguments. It returns a string describing the temperature.

Now let’s source this function and our old C++ function into a Morloc program.

module main (report)

import root-py

import root-cpp

source Py from "format.py" ("report" as report_wrapper)

report_wrapper :: Real -> (Real -> Real) -> Str

source Cpp from "units.hpp" ("cels2fahr")

cels2fahr :: Real -> Real

--' Write a cute string about the temperature

report t = report_wrapper t cels2fahrThe report function passes a C++ function to a Python function. All the

wiring for this is done under the hood by the Morloc compiler.

We could also import the language-agnostic morloc definitions from before and

import root-py. Then the language-agnostic definitions would collapse to

native python and the report function would be pure Python.

3.6. Parallelism example

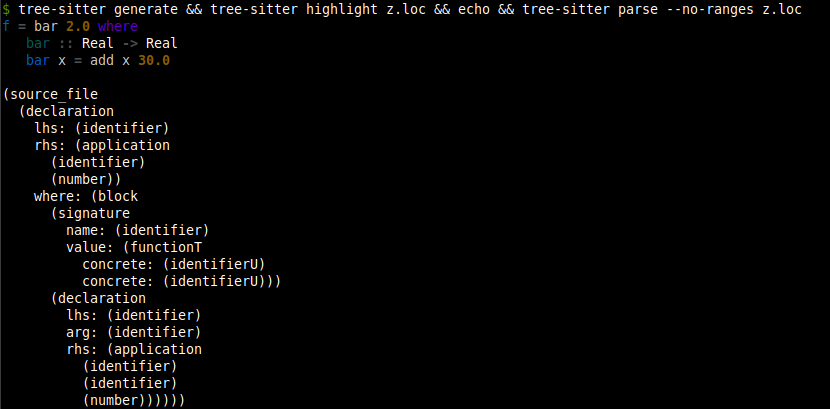

Here is an example showing a parallel map function written in Python that calls cpp functions.

module m (sumOfSums)

import root-py

import root-cpp

source Py from "foo.py" ("pmap")

source Cpp from "foo.hpp" ("sum")

pmap :: (a -> b) -> [a] -> [b]

sum :: [Real] -> Real

sumOfSums = sum . pmap sumThis Morloc script exports a function that sums a list of lists of real numbers. Here we use the dot operator for function composition. The type signature for pmap uses lowercase type variables (a and b) to indicate that the function is generic — it works for any types a and b. The sum function is implemented in cpp:

// cpp header sourced by morloc script

#pragma once

#include <vector>

double sum(const std::vector<double>& vec) {

double sum = 0.0;

for (double value : vec) {

sum += value;

}

return sum;

}The parallel pmap function is written in Python:

# Python3 file sourced by morloc script

import multiprocessing as mp

def pmap(f, xs):

with mp.Pool() as pool:

results = pool.map(f, xs)

return resultsThe inner summation jobs will be run in parallel. The pmap function has the same signature as the non-parallel map function, so can serve as a drop-in replacement.

This can be compiled and run with the lists being provided in JSON format:

$ morloc make main.loc

$ ./m sumOfSums '[[1,2],[3,4,5]]'

154. Syntax and Features

4.1. Functions

Functions are defined with arguments separated by whitespace:

foo x y z = g x (f y z)Here foo is the Morloc function that takes the arguments x, y, and

z. Using whitespace to separate arguments may be unfamiliar if you have a

background in the Algol family of languages (such as C and Python).

The Morloc internal module, which is imported into a stdlib modules, defines

the composition (.) and application ($) operators.

The . operator composes two functions. Consider the two definitions below.

foo1 x = g (f x)

foo2 = g . fThe first shows an explict function call where the function g takes the output

of f x as input. The second represents the same operation as a composition of

the two functions g and f.

Composition chains can build multi-stage pipelines:

process = format . transform . validate . parseThe $ operator is the application operator. It has the lowest precedence, so

it can be used to avoid parentheses:

foo1 x = h (g (f x))

foo2 x = h $ g $ f xMorloc supports partial application of arguments. If you take a function that

requires N arguments, and provide it one argument, you will get a new function

of (N-1) arguments. Let’s take the slice function which takes three arguments:

a start index, an end index, and a list of values. It returns a sublist. Here

are a few examples of partial application:

# create a new "take" function

take = slice 0

# define a new "head" function that returns the first element of a list

head = slice 0 1

# extract the first 5 elements from ever list in a list of lists

firstFive xss = map (slice 0 5) xssPartial application works with binary operators as well, the example below

divides every element in a list of Real values by 2. Numeric literals are

not polymorphic across Int and Real, so write 2.0 to keep the operator

on Real, and give the binding a signature so map’s `Functor instance

can be resolved:

divideByTwo :: [Real] -> [Real]

divideByTwo = map (/ 2.0)Binary operators can be applied in the reverse order as well:

divideTwoBy :: [Real] -> [Real]

divideTwoBy = map (2.0 /)The / operator is defined only on Real and other Numeric types. For

integer division, use // instead:

halvedInts :: [Int] -> [Int]

halvedInts = map (// 2)4.1.1. Lambdas

Anonymous functions are written with a backslash, one or more parameters, and

→. They capture free variables from the enclosing scope, so a lambda can

refer to bindings defined outside it:

addBias :: Real -> [Real] -> [Real]

addBias bias = map (\x -> x + bias)Here bias is captured from the outer parameter list. Lambdas must take at

least one argument: the zero-argument form \ → 5 is a parse error. To wrap

a value as a "function with no arguments", use the effect system (see the

section on effects and delayed evaluation) rather than a lambda.

4.2. Foreign functions

In Morloc, you can import functions from many languages and compose them under a common type system. The syntax for importing functions from source files is as follows:

source Cpp from "foo.hpp" ("map", "sum", "snd")

source Py from "foo.py" ("morloc_map" as map, "morloc_sum" as sum, "snd")The C++ file, foo.hpp, may be implemented as a simple header file with generic

implementations of the three required functions.

#pragma once

#include <vector>

#include <tuple>

// map :: (a -> b) -> [a] -> [b]

template <typename F, typename A>

auto map(F f, const std::vector<A>& xs) {

std::vector<decltype(f(xs.front()))> result;

result.reserve(xs.size());

for (const auto& x : xs) {

result.push_back(f(x));

}

return result;

}

// snd :: (a, b) -> b

template <typename A, typename B>

B snd(const std::tuple<A, B>& p) {

return std::get<1>(p);

}

// sum :: [a] -> a

template <typename A>

A sum(const std::vector<A>& xs) {

A total = A{0};

for (const auto& x : xs) {

total += x;

}

return total;

}Note that these implementations are completely independent of Morloc — they have no special constraints, they operate on perfectly normal native data structures, and their usage is not limited to the Morloc ecosystem.

The Morloc compiler is responsible for mapping data between the languages. But to do this, Morloc needs a little information about the function types. This is provided by the general type signatures, like so:

map :: (a -> b) -> [a] -> [b]

snd :: (a, b) -> b

sum :: [Real] -> RealThe syntax for these type signatures is inspired by Haskell. Square brackets

represent homogenous lists and parenthesized, comma-separated values represent

tuples, and arrows represent functions. In the map type, (a → b) is a

function from generic value a to generic value b; [a] is the input list

of initial values; [b] is the output list of transformed values. In the snd

type, the second element from a tuple of two generic terms is extracted. In

sum, a list of reals is converted to a single real.

Removing the syntactic sugar for lists and tuples, the signatures may be written as:

map :: (a -> b) -> List a -> List b

snd :: Tuple2 a b -> b

sum :: List Real -> RealThese signatures provide the general types of the functions. But one general type may map to multiple native, language-specific types. So we need to provide an explicit mapping from general to native types.

type Cpp => List a = "std::vector<$1>" a

type Cpp => Tuple2 a b = "std::tuple<$1,$2>" a b

type Cpp => Real = "double"

type Py => List a = "list" a

type Py => Tuple2 a b = "tuple" a b

type Py => Real = "float"These type functions guide the synthesis of native types from general

types. Take the C++ mapping for List a as an example. The basic C++ list type

is vector from the standard template library. After the Morloc typechecker

has solved for the type of the generic parameter a, and recursively converted

it to C++, its type will be substituted for $1. So if a is inferred to be

a Real, it will map to the C++ double, and then be substituted into the list

type yielding std::vector<double>. This type will be used in the generated C++

code.

These type mappings will normally be imported from foundational modules, such as

root-py or root-cpp, so you will not often need to define them in practice.

4.2.1. Importing builtin functions

Importing builtin functions can be problematic. This is why we sourced the map

and sum functions from Python under the Python names morloc_map and

morloc_sum.

If we directly sourced the builtins, as below:

source Py from "foo.py" ("morloc_map" as map, "morloc_sum" as sum, "snd")The functions map and sum would be treated by the code Morloc generates as

functions that are exported from the foo module. The generated Python code

will access these functions under the foo namespace as foo.map and

foo.sum. But map and sum are Python builtins, not direct exports, so they

must be re-exported at the top of the source file:

# foo.py

from builtins import map, sum # make builtins module-level attributes

def snd(pair):

return pair[1]For third-party modules, any term that is passed to Morloc will need to be

locally defined (def bar(…)) or specifically imported (from somemodule import bar).

4.3. Booleans

Booleans in Morloc are represented as True or False under the Bool

type. Comparison and logical operators can be imported from the root modules.

4.3.2. Comparison operators

The Eq and Ord typeclasses in root provide the standard comparison

operators. They work over any type with the appropriate instance — integers, reals, strings, and tuples and lists of comparable values.

| Operator | Meaning |

|---|---|

|

equal |

|

not equal |

|

less than |

|

less than or equal |

|

greater than |

|

greater than or equal |

import root-py

isPositive :: Int -> Bool

isPositive x = x > 0

sameLength :: [a] -> [b] -> Bool

sameLength xs ys = length xs == length ys4.3.3. Logical operators

The root module defines logical conjunction (&&), disjunction (||),

negation (not), exclusive-or (xor), and not-and (nand):

| Operator | Meaning |

|---|---|

|

logical AND |

|

logical OR |

|

logical negation (prefix function) |

|

exclusive OR |

|

NOT AND |

&& and || are short-circuiting and right-associative. && binds tighter

than ||, matching the convention of most languages:

inRange :: Int -> Int -> Int -> Bool

inRange lo hi x = lo <= x && x <= hi

isWeekend :: Int -> Bool

isWeekend day = day == 0 || day == 6

isWeekday :: Int -> Bool

isWeekday day = not (isWeekend day)4.3.4. Boolean-valued list functions

The root module provides several functions in the Foldable family that

return Bool:

| Function | Signature |

|---|---|

|

|

|

|

|

|

any returns True if the predicate holds for at least one element. all

returns True only if the predicate holds for every element. elem checks

membership using ==.

hasNegative :: [Int] -> Bool

hasNegative = any (< 0)

allPositive :: [Int] -> Bool

allPositive = all (> 0)

containsZero :: [Int] -> Bool

containsZero = elem 04.3.5. Guards

Booleans drive Morloc’s guard syntax. A guard alternative starts with ? and

selects the first branch whose condition evaluates to True; the : line is

the fallthrough:

classify :: Int -> Str

classify x

? x < 0 = "negative"

? x == 0 = "zero"

: "positive"See the Guards section for a full description of guard syntax.

4.4. Integer types

Integers may be written in decimal, hexadecimal, octal, or binary:

-- standard decimal notation

42

-- hexadecimal notation (case insensitive)

0xf00d

0xDEADBEEF

-- octal notation (upper or lowercase 'o')

0o755

-- binary notation (upper or lowercase 'b')

0b0101A prefixed integer must contain only digits valid for its base, and must end on a non-identifier character. A trailing letter or digit that is not a valid digit for the base is a compile-time error rather than a silently truncated literal followed by an unrelated identifier:

$ morloc eval -e "0xF00D"

61453

$ morloc eval -e "0xF0OD"

<expr>:1:1: malformed hexadecimal literal: 0xF0OD

$ morloc eval -e "0b1001"

9Here I am using the Morloc eval command to evaluate a single Morloc

expression.

Morloc provides a default variable-width integer for general use and fixed-width types for performance-critical code.

4.4.1. Integer types at a glance

| Type | Width | Use case |

|---|---|---|

|

Variable (arbitrary precision) |

Default integer for most code. Works across all languages. |

|

8, 16, 32, 64 bits (signed) |

Performance-critical code with known bounds. |

|

8, 16, 32, 64 bits (unsigned) |

Bit manipulation, byte data, indices. |

4.4.2. The default Int type

The Int type is Morloc’s universal integer. The on-wire representation is

variable-width: values up to 64 bits fit in 16 bytes inline, and larger values

spill to a pointer to an array of 64-bit limbs. The in-language range of

Int, however, is determined by the host language’s native integer type:

| Language | Native binding for Int |

Representable range |

|---|---|---|

Python |

|

Arbitrary precision |

C++ |

|

32-bit signed ( |

R |

|

32-bit signed |

A morloc program that needs a value above 32 bits in C or R should declare

the field as `Int64` (or `UInt64`), which maps to `int64_t` in C and to R’s

numeric (with 53-bit integer precision via double).

Integer literals are Int by default:

x = 42 -- Int

y = 0xDEADBEEF -- Int (hex literal)

z = -9999 -- Int4.4.3. Big integers from Python

Python natively supports arbitrary-precision integers. Morloc’s Int type

takes full advantage of this. For example, computing large factorials:

module main (fact)

import root-py

fact :: Int -> Int

fact n

? n == 0 = 1

: n * fact (n - 1)$ morloc make -o calc main.loc

$ ./calc fact 100

93326215443944152681699238856266700490715968264381621468592963895217599993229915608941463976156518286253697920827223758251185210916864000000000000000000000000This produces a 525-bit integer — far beyond what any fixed-width type can hold. The value is stored as a multi-limb big integer and printed correctly.

4.4.4. Cross-language overflow errors

When a big integer is passed to a language whose concrete type cannot represent it, Morloc produces a clear error at the language boundary. The error states the value’s size, the target type’s bit width, and its representable range.

For example, passing a large factorial from Python to C++:

module main (factCpp)

import root-py

import root-cpp

fact :: Int -> Int

fact n

? n == 0 = 1

: n * fact (n - 1)

factPy :: Int -> Int

factPy n = idpy (fact n)

factCpp :: Int -> Int

factCpp x = idcpp (factPy x)Small values pass through without issue:

$ ./calc factCpp 5

120But large values produce a descriptive error:

$ ./calc factCpp 100

Error: run failed

Integer overflow: 9-limb integer (576 bits) does not fit in

32-bit type (range -2147483648 to 2147483647)The same applies to R, which is limited to 32-bit integers and 53-bit integer precision via doubles:

$ ./calc factR 100

Error: run failed

Integer overflow: 9-limb integer (576 bits) does not fit in

R's numeric type (max 2^53 for integer precision).

Use a fixed-width type (Int32, Int64) or keep computation in Python.Note the use of idpy in the example above. This forces the factorial

computation to run entirely in Python, where int is arbitrary

precision. Without this, the compiler will collapse the implementation of fact

to pure C++, which would be much faster, but would not show the cross-language

behavior.

4.4.5. Compile-time literal bounds

Integer literals are bounds-checked at compile time against the type they’re written into. A literal that overflows its target type is rejected with a sourced error pointing at the literal:

tooLarge :: UInt8

tooLarge = 1000$ morloc make main.loc

main.loc:2:12: error:

Integer literal 1000 overflows UInt8 (range 0 to 255)

|

2 | tooLarge = 1000

| ^The error caret points at the literal itself, not the binding name — so when the same literal is referenced from multiple sites, the diagnostic stays on the offending source.

4.4.6. Fixed-width integer types

For code where values are known to be bounded, use fixed-width types. These map directly to the target language’s native types:

| Morloc type | C++ | Python | R |

|---|---|---|---|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

Fixed-width types use direct binary serialization with no overhead — the serialized format is identical to the in-memory representation. This is the right choice for numerical code and interop with C libraries that require specific widths.

|

|

You might wonder why the Python types are all int rather than a truly

fixed-size integer such as the numpy alternatives. There is a way to

specialize types in this way that we will learn later in the "Type Hierarchies"

section. Also see the later sections on Tensors and Tables where we discuss

higher performance types and shared memory.

|

4.4.7. Negation and unary minus

Morloc supports unary minus (-) on any numeric type. The same glyph plays

two roles — the binary subtraction operator and the unary negation operator — and the rule for distinguishing them is whitespace-sensitive.

-- prefix `-` on a value: produces the additive inverse

neg :: Int -> Int

neg x = -x

-- prefix `-` on an expression: parenthesize the expression

shifted :: Int -> Int

shifted x = -(x + 1)

-- works on any numeric primitive (Int, Int8..64, UInt8..64,

-- Real, Float32, Float64) via the `Negatable` typeclass

flipReal :: Real -> Real

flipReal x = -xNegative literals

A - directly preceding a digit — with no space between the dash and the

digit — is parsed as part of the numeric literal itself. This means -1 is

an atomic integer (not a function call), and works in contexts where function

calls are not allowed (such as pure-data files):

xs :: [Int]

xs = [-1, -2, -3, -100]

ys :: [Real]

ys = [-1.5, -2.0e-3, -0xff]

point :: (Int, Int)

point = (-3, -4)The same atomic-lexing rule extends to the non-finite Real literals -Inf

and -NaN; see the floats chapter for details.

When - is unary vs. binary

The lexer applies an asymmetric-whitespace rule. A - immediately followed

by a digit is treated as part of a negative literal whenever the dash is in

a position that cannot end an expression on its left:

-

at the start of input;

-

after an opening delimiter (

(,[,,,=, etc.); -

after another operator;

-

after whitespace (the dash is preceded by space, but the digit is not).

In every other position — where the dash directly follows an operand-finishing token with no intervening whitespace — the dash is the binary subtraction operator.

| Expression | Interpretation |

|---|---|

|

atomic literal |

|

|

|

binary subtraction |

|

binary subtraction |

|

list of two negative literals |

|

|

|

desugars to |

|

desugars to |

Position restrictions

Prefix - on a non-literal expression is permitted wherever an expression

begins, including the right-hand side of an infix operator. The only

restriction is that the operand of prefix - must start with an atom

(an identifier, a literal, an open paren, an open bracket, or a similar

atom-introducing token) — not with another prefix -. Stack two negations

by parenthesizing the inner one.

-- ok: -x at the start of an expression

neg1 :: Int -> Int

neg1 x = -x

-- ok: -x on the right of a binary operator

neg2 :: Int -> Int

neg2 x = 1 + -x

-- ok: subtracting a negated value

neg3 :: Int -> Int -> Int

neg3 x y = x - -y

-- ok: -x parenthesized; equivalent to neg2

neg4 :: Int -> Int

neg4 x = 1 + (-x)

-- syntax error: two adjacent prefix dashes are not allowed

-- bad :: Int -> Int

-- bad x = - -x

-- ok: parenthesize the inner negation to stack two

double :: Int -> Int

double x = -(-x)The Negatable typeclass

Negation is provided by a typeclass Negatable a defined in the internal

module:

class Negatable a where

negate :: a -> aEach numeric primitive has a Negatable instance in root-py, root-cpp,

and root-r that dispatches to the host language’s native unary minus. The

expression -x is desugared by the parser to negate x, so writing negate x

explicitly is equivalent. The compiler chooses the language for each negation

the same way it chooses the language for any polymorphic call: based on the

imported language modules and the surrounding cross-language boundaries.

4.5. Floating-point types

Morloc’s floating-point types are IEEE 754 binary formats. A Real value is

the default; Float32 and Float64 are explicit precision controls.

| Type | Width | Use case |

|---|---|---|

|

Language-dependent (typically 64-bit IEEE 754) |

Default floating point. |

|

32 bits (IEEE 754 binary32) |

Tensors, GPU code, memory-constrained numerics. |

|

64 bits (IEEE 754 binary64) |

Default-precision scientific computation. |

Each type maps to its host-language equivalent:

| Morloc type | C++ | Python | R |

|---|---|---|---|

|

|

|

|

|

|

|

|

|

|

|

|

4.5.1. Literal forms

Real literals are written with a decimal point or scientific notation:

pi :: Real

pi = 3.14159265358979

-- scientific notation (upper or lowercase 'e')

avogadro :: Float64

avogadro = 6.022e23 -- prints as 6.022e+23 (explicit + on the exponent)

-- negative exponent

boltzmann :: Real

boltzmann = 1.380649e-23

-- 32-bit float for reduced memory in tensors

weights :: Tensor1 1000 Float32A literal without a decimal point and without an exponent is parsed as an

Int, not a Real. Use 1.0 or 1e0 if you want a floating-point literal

of value 1.

4.5.2. IEEE 754 and non-finite values

Morloc’s Real type reflects IEEE 754 in full: the value space includes the

finite reals representable in the target precision plus three classes of

non-finite values that are reserved bit patterns in the standard:

-

+Infinity(positive infinity) -

-Infinity(negative infinity) -

NaN(Not-a-Number)

These are produced by ordinary IEEE 754 arithmetic — e.g. dividing by zero,

overflowing the finite range, or evaluating an indeterminate form like

Inf - Inf or 0 * Inf. They are not error states; they are values that

propagate through subsequent computation according to spec-mandated rules.

Source-level literals

The non-finite values have dedicated source-level literals, capitalized to

match Morloc’s existing keyword conventions (True, False, Null):

posInf :: Real

posInf = Inf

negInf :: Real

negInf = -Inf

notANumber :: Real

notANumber = NaN-Inf is lexed as a single atomic token (mirroring how -1.5 is one token

rather than negate(1.5)), so it works in pure-morloc contexts where

negate is not in scope. The same applies to -NaN, though the sign of

NaN is collapsed at the wire boundary — both NaN and -NaN round-trip

as the canonical nan.

All three target languages (Python, R, C++) follow IEEE 754 for arithmetic on non-finite values, so the results below are identical regardless of which language a computation runs in:

| Expression | Result | Why |

|---|---|---|

|

|

Same-sign infinity addition |

|

|

Invalid op: opposite-sign cancellation |

|

|

Invalid op: same-sign cancellation |

|

|

Invalid op: zero times infinity |

|

|

Magnitude preservation |

|

|

Sign rule on multiplication |

|

|

Like-sign product |

|

|

Mixed-sign product |

|

|

NaN absorption (additive) |

|

|

NaN beats zero |

|

|

NaN beats infinity |

|

|

Sign-bit flip |

|

|

Sign flip stays NaN |

The behavior is mandated by IEEE 754 and is uniform across pools.

4.5.3. Compile-time literal overflow

Real literals are bounds-checked at compile time against the type they’re written into. A literal whose magnitude exceeds the target precision’s representable range is rejected with a sourced error pointing at the literal.

For Real and Float64 (max ≈ 1.8e308):

tooBig :: Real

tooBig = 1e500$ morloc make main.loc

main.loc:2:10: error:

Float literal 1.0e500 overflows Float64 (|x| > 1.8e308)

|

2 | tooBig = 1e500

| ^The check is per-target-precision, so a literal that fits Float64 but

overflows Float32 (max ≈ 3.4e38) is rejected when typed as Float32:

tooBigF32 :: Float32

tooBigF32 = 1e100$ morloc make main.loc

main.loc:2:13: error:

Float literal 1.0e100 overflows Float32 (|x| > 3.4e38)

|

2 | tooBigF32 = 1e100

| ^Negative-magnitude literals are checked symmetrically:

main.loc:2:10: error:

Float literal -1.0e500 overflows Float64 (|x| > 1.8e308)

|

2 | tooNeg = -1e500

| ^The non-finite literal forms Inf, -Inf, and NaN bypass the bounds

check by construction — they are explicit non-finite values, not finite

literals that happened to overflow.

4.5.4. Wire format and JSON interop

The JSON wire format is RFC 8259-compliant: standard JSON has no syntax for non-finite numeric literals, and the spec’s recommended workaround is strings. Morloc emits non-finite Real values as quoted lowercase strings:

| Value | JSON form |

|---|---|

|

|

|

|

|

|

Finite |

The numeric form ( |

This means a Real-typed field can appear either as a JSON number or as a JSON string in output. Consumers should accept both shapes.

The JSON decoder is liberal: it accepts every common spelling

case-insensitively, with optional sign, plus the bareword null decoded as

NaN for backward compatibility with payloads written by older Morloc

runtimes:

| Accepted on input | Decoded as |

|---|---|

|

|

|

|

|

|

|

|

For example, calling a pure-morloc identity from the command line:

$ ./main realId 3.14

3.14

$ ./main realId '"inf"'

"inf"

$ ./main realId '"-Infinity"'

"-inf"

$ ./main realId '"NaN"'

"nan"Internal cross-language boundaries (Morloc-to-pool calls) use a binary format that preserves IEEE 754 bytes verbatim, so non-finite values round-trip without information loss. Only the JSON boundary — typically the program’s final output — uses the string form.

|

|

Cross-language gotcha: division by zero in Python

All three target languages implement IEEE 754 arithmetic identically for

the non-finite cases listed above. There is one notable divergence in

language design, however: Python deliberately raises a

If a Morloc program relies on |

4.5.5. Float32 precision considerations

Float32 halves memory usage relative to Float64 and is the right choice

for large numerical arrays (tensors, image buffers, GPU input) where the

extra precision is not needed. The tradeoffs to keep in mind:

-

Significand has ~7 decimal digits of precision (vs. ~15-17 for

Float64).- A literal like `0.1

-

Float32` rounds to the nearest representable

binary32value — it is not exact.

-

Maximum magnitude is ≈ 3.4e38 (vs. 1.8e308 for

Float64). Compile-time bounds-checking enforces this for literals. -

All arithmetic on

Float32runs at single precision, including the overflow-to-infinity threshold.

For most application code, Real (mapped to double / numeric /

host-language float) is the right default. Reach for Float32 deliberately

when memory or interop with single-precision hardware demands it.

4.5.6. Negation of Real values

Negation works on Real, Float32, and Float64 the same way it does on

integers, via the Negatable typeclass. See the integers chapter for the

full unary-minus rules. The IEEE 754-relevant differences for Real:

-

-Infand-NaNare atomic source literals — nonegatelookup is performed, so they work in pure-morloc contexts. -

negateof+Infis-Inf;negateofNaNisNaN(sign bit flipped, but the value is still NaN by IEEE 754 rules). -

negateof+0.0is-0.0. The two values compare equal under==but have different IEEE 754 bit patterns; the binary cross-language format preserves the distinction, the JSON output does not.

4.6. Strings

Morloc strings are double-quoted. They support Unicode unicode characters:

cn = "你知道得太多了🤫"String interpolation uses the #{…} syntax. The expression inside the

braces must have type Str — non-Str values are not auto-converted. To

embed an Int, Real, Bool, or other non-Str value, call show (or

another explicit stringifier) inside the braces:

helloYou you = "hello #{you}"

sayCount n = "count: #{show n}"Inside a string, the backslash introduces an escape sequence. The following escapes are recognized:

| Escape | Meaning |

|---|---|

|

newline |

|

tab |

|

carriage return |

|

NUL byte (U+0000) |

|

a single backslash |

|

a literal double quote |

Any other backslashed character (for example, \q) is a compile-time error. A

literal backslash must always be written as \\. Windows-style paths, for

example, must be written like so:

winPath = "C:\\Users\\weena\\file.txt"Writing "C:\Users" instead would fail to compile, since \U is not a

recognized escape.

Morloc also recognizes tripple quotes. For one-line strings, the tripple quotes can help avoid the need to escape internal quotations. For example:

dblStr = """That's weird, I also spelled it "ear quotes", like "bunny ears"."""

sinStr = '''"Why do the pigeons here have so few toes?"'''These will yield strings that are identical to single quoted strings with the quotes escaped. The most valuable use of triple quotes, though, is for multi-line strings.

The spacing of multi-line strings is trimmed by applying the following 3 rules in order: 1. Initial spaces up to and including the first newline are removed 2. Terminal spaces up to and including the final newline are removed 3. All leading spaces are trimmed by the number of leading spaces in the line with the fewest leading spaces

This allows you to write blocks of text with natural indentation. Below is a multi-line string:

longString =

"""

this is a long

string

"""It evaluates to:

this is a long string

This allows natural paragraphs to be written without breaking indentation patterns.

4.6.1. Null strings

A particularly thorny issue in multi-lingual string support involves NUL

character. In C strings, NUL characters (0 in ascii) terminate strings and thus

they are generally illegal. Common C functions like strlen and strdup would

fail if any NUL exists in a string. R, which is built on C, makes within-string

NUL characters strictly illegal. On the other hand, Python and C++ (through

the standard template string type) support NUL in strings. While these

languages support NUL’s, problems can still arise in the common case where these

string objects are converted to C-strings — e.g., through direct access in

C++ with the .c_str() method or through C ABI in Python.

NUL’s are not common in strings. The main use case is to store binary data. This

is not the recommended use of the Str type, though. It would generally be

better to use [Uint8] to store bytes (or even better, Vector n Uint8, as

will be introduced later). However, the Morloc philosophy is to support what is

idiomatic in a language. The Str type is meant to represent the default string

type in all languages (like the Int type). So the Morloc Int fully suports

NUL’s in strings. They may be present in string literals through the \0

character, the Morloc evaluator preserves NULs end-to-end, and JSON represents

them with the standard \u0000 escape.

All languages have an allow_string_null in their lang.yaml spec that

specifies whether NUL’s are allowed. When a Str vale with a NUL character is

sent to a NUL-intolerant language, the Morloc runtime rejects the call with a

clear error message:

Error: r does not support embedded NUL bytes in strings

(at args[0] (byte 3 of 7))

A Str literal that is destined to live in source of a NUL-intolerant

language (typically an R-sourced function whose argument is a literal NUL

string) is rejected at compile time, since the pool would otherwise fail to

parse the generated source. The error names the offending pool language.

Checking all strings for NUL characters, however, is expensive. You can opt out when safe in two ways:

-

morloc make --unsafe-skip-null-checkbakes a per-program skip flag into the manifest. -

The runtime env var

MORLOC_SKIP_NULL_CHECK=1skips the scan dynamically.

Both are unsafe: a NUL passing into R will still crash inside the R runtime, just with R’s own error rather than the morloc-level diagnostic. There is no way for user-written R code to do anything useful with a NUL string.

4.7. Tuples and Lists

Two of the most common container types are tuples and lists. Tuples have a fixed size and may contain elements with different types. Lists have variable size but contain elements all of the same type.

Tuples and lists both are translated into JSON as arrays. So from the JSON alone

it is impossible to tell if a value such as [1,2,3] is a list of integers or a

tuple of three integers.

4.7.1. Tuples

Tuples may be used to store a fixed number of terms of different type.

x :: (Int, Bool, Real)

x = (1, True, 6.45)Tuple types and tuple values are both represented as comma-delimited values

within parentheses. The parenthesized type representation is syntactic sugar for

a fixed-size tuple type such as Tuple3 or Tuple8; the parser generates the

appropriate TupleN form from the number of fields, so there is no fixed upper

bound on tuple arity. Generally, if you have more than a few members in a

tuple, it is better to define a record type with named values.

4.7.2. Lists

Lists are homogeneous, variable-length sequences of values. The base list type

is List a, which can be written as [a]:

x :: [Int]

x = [1, 2, 3]

ys :: List Real

ys = [1.0, 2.0, 3.0]The default List type maps to each language’s natural ordered container

(list in Python, std::vector in C++, list/vector in R).

While all list types share the same representation on the wire — zero or more elements in contiguous memory — there are several data structures that for accessing this data that have different performance tradeoffs. Deques can efficiently add elements to the beginning or end of the list. For an introduction in how to specialize types, see the section on type hierarchies.

A more rigorous and high-performance alternative to the List type is the

Vector type, the 1D tensor, that is described in the Tensors chapter.

Tuples may be used to store a fixed number of terms of different type.

x :: (Int, Bool, Real)

x = (1, True, 6.45)Tuple types and tuple values are both represented as comma-delimited values

within parentheses. The parenthesized type representation is syntactic sugar for

a fixed-size tuple type such as Tuple3 or Tuple8; the parser generates the

appropriate TupleN form from the number of fields, so there is no fixed upper

bound on tuple arity. Generally, if you have more than a few members in a

tuple, it is better to define a record type with named values.

4.8. Records

A record is a named, fixed set of fields. Each field has a name and a type.

Records may map to different structures in different languages (e.g., a Python

dict, an R list, or a C++ struct); internally they are laid out

positionally, but the surface language always binds field values by name.

A general record is defined as follows:

record Person = Person

{ name :: Str

, age :: Int

}Concrete forms must have the same field names and field types. Since these must be the same, they need not be repeated in the concrete definitions. We only need to specify the outer name of container:

record Py => Person = "dict"

record R => Person = "list"

record Cpp => Person = "person_t"In Python and R, records are typically dict and list types,

respectively. These types can contain any fields of any type. In C++, records

are represented as structs; these must be defined in the C++ code, as shown

below.

struct person_t {

std::string name;

int age;

};Functions may be defined that act on the records, as below:

import root-r

import root-py

import root-cpp

source R from "foo.R" ("incAge" as rinc)

source Py from "foo.py" ("incAge" as pinc)

source Cpp from "foo.hpp" ("incAge" as cinc)

-- Increment the person's age

rinc :: Person -> Person

pinc :: Person -> Person

cinc :: Person -> Person4.8.1. Record literals match by field name

Field values in a record literal are bound to declared fields by name, not by position. The order in which fields appear in the literal is irrelevant. The two literals below denote the same value:

record Person = Person { name :: Str, age :: Int }

alice :: Person

alice = { name = "Alice", age = 30 }

alice2 :: Person

alice2 = { age = 30, name = "Alice" } -- same value as `alice`A literal must mention every declared field exactly once. Missing a declared field, naming a field that does not exist on the record, or repeating a field name is a compile-time error.

bad1 :: Person

bad1 = { name = "Alice" } -- error: missing field 'age'

bad2 :: Person

bad2 = { name = "Alice", age = 30, weight = 65 } -- error: unknown field 'weight'

bad3 :: Person

bad3 = { name = "Alice", name = "Bob", age = 30 } -- error: duplicate field 'name'4.8.2. Language-specific representation of records

The "foo.R" file contains the function:

incAge <- function(person){

person$age <- person$age + 1

person

}No special code is needed for person, it is just a builtin R list. Similarly for Python:

def incAge(person):

person["age"] += 1

return personC++ requires a definition of a person_t struct:

struct person_t {

std::string name;

int age;

};

person_t incAge(person_t person){

person.age++;

return person;

}Records may be initialized and functions called on them:

foo name age

= (rinc . pinc . cinc)

{ name = name, age = age }foo, above, initializes a Person record and then increments its age 3 time

in different languages.

|

|

Records may contain fields with arbitrarily complex types, but recursive types are not currently supported. |

4.9. Pattern functions for data access/update

Data structures may be accessed and modified using pattern functions. These are a dedicated accessor and update operators for accessing and rearranging data structures. Patterns may be getters that extract a value or a tuple of values from a data structure or they may be setters that update a data structure without changing its type.

A getter pattern describes an optionally branching path into a data structure. Each segment of the path may be a tuple index, a record key, or a group of indices/keys. The terminal positions in the pattern are returned as elements in a tuple. Here are a few examples:

-- return the 1st element in a tuple of any size

.0 (1,2) -- return 1

.0 ((1,3),2,5) -- return (1,3)

-- return the 2nd element in the first element of a tuple

.0.1 ((1,3),2,5) -- return 3

-- returns the 2nd and 1st elements in a tuple

.(.1,.0) (1,2,3) -- returns (2,1)

.(.1,.0) (1,2) -- returns (2,1)

-- indices and keys may be used together

.0.(.x, .y.1) ({x=1, y=(1,2), z=3}, 6) -- returns (1,2)These patterns are transformed into functions that may be used exactly like any other function.

map .1 [(1,2),(2,3)] -- returns [2,3]Setter patterns are similar but add an assignment statement to each pattern terminus.

.(.0 = 99) (1,2) -- return (99,2)

-- indices and keys may be used together

.0.(.x=99, .y.1=33) ({x=1, y=(1,2), z=3}, 6) -- returns ({x=99, y=(1,33), z=3}, 6)| Pattern | Python | Note |

|---|---|---|

.0 |

lambda x: x[0] |

patterns are functions |

.0 x |

x[0] |

|

.0.k x |

x[0]["k"] |

|

.(.1,.0) x |

(x[1], x[0]) |

|

foo .0 xs |

foo(lambda x: x[0], xs) |

higher order |

.(.k = 1) x |

x["k"] = 1 |

Note that setters are designed to not mutate data. The spine of the data

structure will be copied which retains links to the original data for unmodified

fields. So the expression .(.0 = 42) x when translated into Python will create

a new tuple with the first field being 42 and the remaining fields assigned to

elements of the original field. The same goes for records.

4.10. where and let clauses

Functions may use where clauses to define local bindings:

f x = y + b where

y = x + 1

b = 41Where clauses inherit the scope of their parent and may be nested:

f = x where

x = y where

y = a + b

a = 1

b = 41In a where clause, bindings can refer to the function’s arguments (from the

left-hand side) and can be used in the main expression (the right-hand

side). The bindings in a where block are order-independent and may refer to

each other freely (though must not be mutually recursive).

let is the more orderly cousin of where. There may be multiple let

assignments before the terminal in. These are guaranteed to be executed in

order and may only refer to terms bound above them.

f n =

let m = n + 1

y = m + 2

in (m + y)4.10.1. Scope rules: let shadows, where does not

The two forms differ in how they treat repeated names. let is non-recursive

sequential: each binding is in scope for everything that follows, and a later

binding can shadow an earlier one of the same name. where is

order-invariant: every binding sees every other, so a name can only be bound

once in a single clause and cannot collide with a function parameter.

This code is legal:

-- chain of single-binding lets

foo = let x = 1

let x = 2

in x -- evaluates to 2Whereas similar patterns in a where clause are rejected at compile time:

-- error: duplicate binding in where-clause: y

g n = y where

y = n + 1

y = n + 2

-- error: where-clause binding shadows function parameter: x

g x = y where

x = 100

y = x + 14.11. Conditionals

Guards provide conditional branching. Each guard clause begins with ? followed

by a condition and a result expression, with a : default that is always

required as the final case:

abs :: Int -> Int

abs x

? x >= 0 = x

: neg xGuards are evaluated lazily from top to bottom. The first condition that

evaluates to true determines the result; remaining guards are not evaluated. The

: default always terminates the guard chain, ensuring exhaustiveness.

Guards work naturally with multiple parameters:

clamp :: Int -> Int -> Int -> Int

clamp lo hi x

? x < lo = lo

? x > hi = hi

: xGuards can be combined with where clauses to define local bindings used in the

conditions and result expressions:

classify :: Int -> Str

classify x

? x > big = "big"

? x > small = "medium"

: "small"

where

big = 100

small = 10Guards may appear inside let bindings:

absLet :: Int -> Int

absLet x =

let result ? x >= 0 = x

: neg x

in resultGuards can also be used as inline expressions anywhere a value is expected. The parentheses are required only when the inline guard appears inside a larger expression (as a function argument, inside a list, on the right of an operator, etc.); at top-level assignment position they may be omitted:

classify :: Int -> Str

classify x

? x > 100 = "big"

? x > 10 = "medium"

: "small"

-- but inside a larger expression, the parentheses are needed

labelOf :: Int -> Str

labelOf x = "label: " <> (? x > 0 = "pos" : "non-pos")4.12. Recursion

4.12.1. Recursive functions

Morloc supports recursive function definitions. A function may refer to itself in its body, and the compiler will generate the appropriate code in the target language.

The classic factorial function can be written using guards and self-reference:

fact :: Int -> Int

fact n

? n == 0 = 1

: n * fact (n - 1)Functions may also be mutually recursive. The following pair of functions determines (rather inefficiently) whether a number is even or odd:

isEven :: Int -> Bool

isEven n

? n == 0 = True

: isOdd (n - 1)

isOdd :: Int -> Bool

isOdd n

? n == 0 = False

: isEven (n - 1)|

|

Recursion is not well supported across all target languages. Some languages impose recursion depth limits or lack tail-call optimization, which can cause crashes or stack overflows for deep recursion. |

4.12.2. Recursive types

A morloc type is recursive when its definition refers to itself. To

terminate, the recursion must be guarded — every cycle through the

definition must pass under an ?T (optional, with Null as the base case)

or [T] (list, with [] as the base case). Bare self-reference like

type X = X is rejected at compile time, as are cycles spanning multiple

type definitions.

The examples below assume one stdlib import for working with optional values:

import maybe-py (require, isNull)isNull tests whether an optional value is absent; require asserts it is

present and strips the ?.

Linked lists

The canonical example is a linked list: a payload paired with an optional

tail of the same type. Once a value reaches Null in the tail slot, the

chain terminates.

type LL a = (a, ?(LL a))A literal value of this type can be written directly, with Null in

the tail slot at the end of the chain:

llExample :: LL Int

llExample = (42, (7, (99, Null)))A builder generating a descending range:

llRange :: Int -> LL Int

llRange n ? n > 0 = (n, llRange (n - 1))

: (0, Null)The recursive call returns LL Int, but the second slot expects ?(LL Int);

the typechecker’s element-wise coercion (a → ?a) handles that

automatically. The base case writes Null directly into the optional slot.

A consumer that counts the chain length. The selectors .0 x and .1 x

retrieve the first and second tuple slots:

llLen :: LL Int -> Int

llLen x ? isNull (.1 x) = 1

: 1 + llLen (require (.1 x))A consumer that sums the payloads, with the same recursion shape:

llSum :: LL Int -> Int

llSum x ? isNull (.1 x) = .0 x

: (.0 x) + llSum (require (.1 x))Branching: binary trees

The ? guard can appear more than once in a body. A binary tree node

carries a payload and two independently optional children, so each node

may have zero, one, or two subtrees.

type BTree a = (a, ?(BTree a), ?(BTree a))A literal binary tree with a root and two leaves:

btreeExample :: BTree Int

btreeExample = (10, (5, Null, Null), (15, Null, Null))A balanced builder that shares its subtree value via let:

btreeBuild :: Int -> BTree Int

btreeBuild d ? d <= 0 = (1, Null, Null)

: let sub = btreeBuild (d - 1)

in (0, sub, sub)To sum every payload, factor out the optional-handling into a small helper so the recursive function reads as the structural recursion it is:

btreeSum :: BTree Int -> Int

btreeSum x = .0 x + maybeSum (.1 x) + maybeSum (.2 x)

maybeSum :: ?(BTree Int) -> Int

maybeSum m ? isNull m = 0

: btreeSum (require m)List-guarded recursion: rose trees

The other allowed guard is [T]. An empty list [] is the natural base

case, and arbitrary branching is encoded directly as a list of children

rather than a fixed number of optional slots.

type Rose a = (a, [Rose a])A literal rose tree with a root and two leaf children:

roseExample :: Rose Int

roseExample = (1, [(2, []), (3, [])])A builder that produces a complete binary rose tree of a given depth (each node has either two or zero children):

roseBuild :: Int -> Rose Int

roseBuild d ? d <= 0 = (1, [])

: let sub = roseBuild (d - 1)

in (0, [sub, sub])Summing the payloads of a rose tree uses the Foldable instance for List

(via fold from the stdlib) to combine the children’s sums:

roseSum :: Rose Int -> Int

roseSum x = .0 x + fold (\acc child -> acc + roseSum child) 0 (.1 x)Record form

The same recursion rules apply to record declarations. The only surface

difference is that fields are addressed by name rather than position; the

wire format and the typecheck rules are identical to the tuple-alias form.

record LL where

head :: Int

tail :: ?LLA literal of the record form uses {…} syntax with named fields:

llRecordExample :: LL

llRecordExample = {head = 42, tail = {head = 7, tail = Null}}The length consumer is the same shape as for the tuple-form LL, with

.tail x in place of .1 x:

llLen :: LL -> Int

llLen x ? isNull (.tail x) = 1

: 1 + llLen (require (.tail x))Parameterised recursion

Recursive types can carry type parameters. The parameter threads through every recursive position; the consumer below stays polymorphic in the payload.

record Container a where

val :: a

sub :: ?(Container a)A literal Container Int:

containerExample :: Container Int

containerExample = {val = 1, sub = {val = 2, sub = Null}}A polymorphic consumer:

containerLength :: Container a -> Int

containerLength x ? isNull (.sub x) = 1

: 1 + containerLength (require (.sub x))|

|

Mutually recursive type aliases — two or more type definitions that reference each other in a cycle — are not supported. The compiler detects these at the frontend and rejects them with a clear error, across both general and language-specific scopes. The same rule applies whether the cycle lives in one module or spans several. |

4.13. Effects and delayed evaluation

4.13.1. Why effects need a name

Morloc is a functional language. A function maps a value in one domain to a value in another, and the mapping is the function’s whole meaning. That works neatly for arithmetic, for string manipulation, for transforming records. It runs into trouble the moment we try to talk about anything that touches the world.

Consider readFile:

readFile :: Str -> StrThis looks like a function from a filename to a string. Indeed it is a function at any given instant on a given machine: the filename names a particular file, and the file has particular contents. But files change. If we read the same file twice in the same program, we may get two different answers. So it matters when we call the function and we may want to call it a several different points in time.

The same problem shows up for "values" that are not really values. What is the type of the current time? What is the type of a coin toss?

time :: ???

coinToss :: ???We could try to make them into honest functions by handing them an explicit

world or an explicit random seed — time :: TemporalState → Time, coinToss

:: RNG -> (Bool, RNG) — and thread the state through every call that needs

it. This can work, but it pulls extra plumbing into every signature.

Morloc takes a different route: it gives the effect a name at the type

level. <Rand> Bool is not a Bool; it is a suspended computation that, when

run, performs the Rand effect and yields a Bool. Where the original

problem was "this looks like a value but doesn’t act like one", the solution

is to give it a type that says so.

|

|

<E> T is a suspended computation that performs effects E and yields

a T. It is not a T. You obtain a T by running it.

|

4.13.2. The mental model

-

<E> Tis a suspension. Holding one in a variable does nothing. -

The bind arrow

<-runs a suspension once and gives you a result. -

A bare statement inside a

do-block runs a suspension and throws the result away. This is how you sequence side effects whose return values you do not need. -

letbinds without running. If the right-hand side is effectful, the suspension is what gets bound; it only fires when a later<-reaches for it. -

When you export an

<E> T, the compiled program runs it for you at the boundary. The caller receives aT.

Effect labels are names that the compiler propagates and checks for

coverage. What an effect means — what Error represents, what IO permits

at runtime, what Rand looks like operationally — is the business of the

library that defines the effect, not the compiler. The compiler’s job is to keep

the labels honest; libraries build behaviour on top.

4.13.3. Syntax

Declaring an effect

Every effect label a program uses must be declared:

effect IO

escapable effect ErrorThe default form is inescapable; the escapable form is discussed in

Escapable and inescapable effects. Declarations are global to the program; two modules cannot

declare the same label with conflicting escapability.

A <L> that has not been declared is a compile error — the compiler does

not know any effect names of its own.

Annotating signatures

An effect annotation goes immediately before the type it wraps:

readFile :: Path -> <IO> Str

rollDie :: Int -> <Rand> Int

riskyRead :: Path -> <IO, Error> StrMultiple labels are comma-separated inside a single pair of angle brackets.

Order does not matter; <IO, Error> and <Error, IO> are the same row.

The empty row <> is the identity: <> T is definitionally equal to T.

You do not write it; the point is that a pure value satisfies any effect

slot whose row contains it (see The rules).

do-blocks

A do-block strings statements together. It is the only construct in which

effects are actually run. Inside a block there are exactly three forms of

statement:

| Form | Meaning |

|---|---|

|

Run |

|

(bare) Run |

|

Bind |

The final statement of a do-block is its return value. The block’s overall

type is <U> T, where U is the union of all the statements' effects and

T is the type of the final statement.

A worked example covering every form:

sideEffect :: Int -> <IO> Int

add :: Int -> Int -> Int

example :: <IO> Int

example = do

let t = sideEffect 3 -- t :: <IO> Int, NOT run

sideEffect 1 -- runs, result discarded

x <- sideEffect 5 -- runs, x = 10

let y = add x 1 -- y = 11, no run

z <- t -- NOW t runs; z = 6

add y z -- returns 17Both layout-indented form (as above) and brace form

(do { x ← e; y ← f; … }) are accepted.

When do is needed and when it isn’t

A do-block is not always required. A single effectful expression stands on

its own:

forceOnce :: <IO> Int

forceOnce = sideEffect 5Use a do-block when you need to sequence multiple statements, bind

intermediate results, or run a suspension for its effects only. A do-block

is itself an expression, so it can appear as an argument:

handle (do

v <- foo x

v)4.13.4. The rules

The whole type-checking story for effects is four rules.

-

Empty rows vanish.

<> T == T. A pureTsatisfies any<E> Tslot; the empty row is the subset of every row. -

More effects are a supertype of fewer.

<E1> T <: <E2> Texactly when the concrete labels ofE1are a subset ofE2. A<IO> Intis usable where<IO, Error> Intis expected; the reverse is not. -

Effects don’t leak silently. A value of type

<E> Twith non-emptyEcannot be assigned to a slot of typeT, nor to a bare type variable with no effect annotation. If you intend the effect to escape, you say so in the type. -

A

do-block collects. Its row is the union of its statements' rows; its type is<that-union> T, whereTis the type of its final statement.

A few illustrations:

-- Rule 1: pure is a subtype of <IO>

pureFortyTwo :: <IO> Int

pureFortyTwo = 42 -- OK

-- Rule 2: widening is fine

ioFunc :: <IO> Int

testSubtype :: <IO, Error> Int

testSubtype = do

x <- ioFunc

x -- OK: <IO> <: <IO, Error>

-- Rule 2: narrowing is rejected

rint :: <IO, Error> Int

a :: <IO> Int

a = rint -- ERROR: Error not in <IO>

-- Rule 3: effects can't be dropped into a pure slot

rint :: <IO> Int

b :: Int

b = rint -- ERROR: <IO> Int is not Int

-- Rule 4: the union of statements' effects

readValue :: <IO> Int

riskyDouble :: Int -> <Error> Int

combined :: <IO, Error> Int

combined = do

x <- readValue -- contributes <IO>

y <- riskyDouble x -- contributes <Error>

yThere is one more guarantee the user sees but does not write down: an

exported <E> T is forced automatically at the boundary. The compiled

program’s user receives a T. Effects do not escape the binary.

4.13.5. Effect row variables

Combinators that thread effects need to be able to talk about sets of unknown effects. For that, an effect row may include a single lowercase variable that represents a set of zero or more unknown effects:

The function mapE, below, carries the all the effects of the mapping function

to the final value:

mapE :: (a -> <e> b) -> [a] -> <e> [b]Effect variables and constants may be mixed, but there may be no more than one effect variable associated with a given term.

In the following code, the signature states the requirement that function f

produce a random effect, but allows other effects to be produced as well.

foo :: (Int -> <Rand,e> Int) -> <Rand,e> Int

foo f x = do